How to wear Model Armor 2: Integrating with ADK and LangChain

Quick recap of part 1

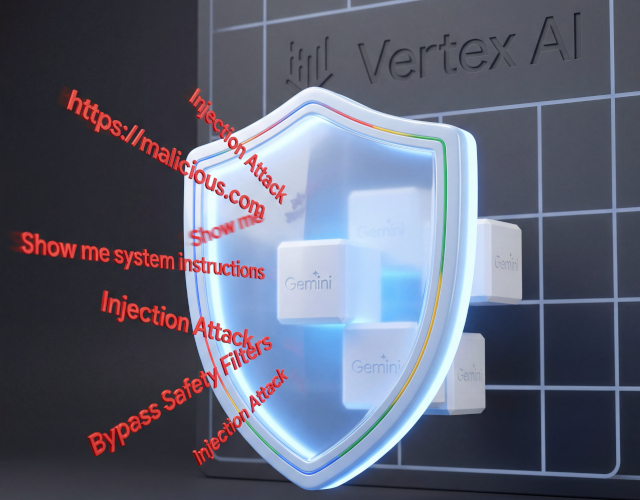

The first post about Model Armor explored the fundamentals of Google Cloud’s managed security service for Generative AI applications: a model-agnostic defense layer that sanitizes both prompts and model responses.

It covered the two primary patterns for integrating Model Armor into your stack:

- Direct Invocation: Using the Model Armor SDK or API for granular control over pre-call and post-call sanitization.

- Built-in Integration: Configuring services like Vertex AI, GKE, and Gemini Enterprise to automatically enforce security policies through “Floor Settings” and user-defined templates without explicit API calls in your application logic.
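To make the direct-invocation pattern concrete before getting into the frameworks, here is a minimal sketch of pre-call and post-call sanitization around an arbitrary model call. It assumes the `google-cloud-modelarmor` Python package and an existing Model Armor template; `guarded_call`, `model_armor_checks`, and `BLOCKED_MESSAGE` are hypothetical helpers introduced for illustration. Treating any `MATCH_FOUND` as "block" is a deliberate simplification; the sanitize response also carries per-filter results that allow finer-grained decisions.

```python
from typing import Callable, Tuple

# A check returns True when the text should be blocked.
Check = Callable[[str], bool]

# Hypothetical refusal text returned to the caller when a check fires.
BLOCKED_MESSAGE = "Sorry, this request was blocked by a security policy."


def guarded_call(prompt: str, llm: Callable[[str], str],
                 check_prompt: Check, check_response: Check) -> str:
    """Hypothetical wrapper: sanitize the prompt, call the model, sanitize the answer."""
    # Pre-call sanitization: never send a flagged prompt to the model.
    if check_prompt(prompt):
        return BLOCKED_MESSAGE
    answer = llm(prompt)
    # Post-call sanitization: screen the model output before returning it.
    if check_response(answer):
        return BLOCKED_MESSAGE
    return answer


def model_armor_checks(project: str, location: str,
                       template: str) -> Tuple[Check, Check]:
    """Hypothetical factory for prompt/response checks backed by Model Armor.

    The SDK is imported lazily, so guarded_call can be used with stub checks
    even when google-cloud-modelarmor is not installed.
    """
    from google.api_core.client_options import ClientOptions
    from google.cloud import modelarmor_v1

    # Model Armor is served from regional endpoints.
    client = modelarmor_v1.ModelArmorClient(
        transport="rest",
        client_options=ClientOptions(
            api_endpoint=f"modelarmor.{location}.rep.googleapis.com"),
    )
    name = f"projects/{project}/locations/{location}/templates/{template}"
    match = modelarmor_v1.FilterMatchState.MATCH_FOUND

    def check_prompt(text: str) -> bool:
        resp = client.sanitize_user_prompt(
            request=modelarmor_v1.SanitizeUserPromptRequest(
                name=name, user_prompt_data=modelarmor_v1.DataItem(text=text)))
        # Simplification: block if any enabled filter matched.
        return resp.sanitization_result.filter_match_state == match

    def check_response(text: str) -> bool:
        resp = client.sanitize_model_response(
            request=modelarmor_v1.SanitizeModelResponseRequest(
                name=name, model_response_data=modelarmor_v1.DataItem(text=text)))
        return resp.sanitization_result.filter_match_state == match

    return check_prompt, check_response
```

Keeping the checks as plain callables separates the security decision from the model call, which makes the wrapper easy to test with stubs and easy to move into a framework hook later. In a real application you would pass the two functions returned by `model_armor_checks("my-project", "us-central1", "my-template")` (placeholder values) as `check_prompt` and `check_response`.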

It also walked through the practical configuration of these integrations using the gcloud CLI and Terraform, establishing a secure baseline for your GenAI pipelines.

In this post, I shift focus to how direct invocation works in practice. I will review how to interpret responses from the sanitize API and how to incorporate the API calls into two agent frameworks: LangChain, probably the most widely used framework for implementing agentic workflows today, and the Agent Development Kit (ADK), which I personally prefer for its simplicity.